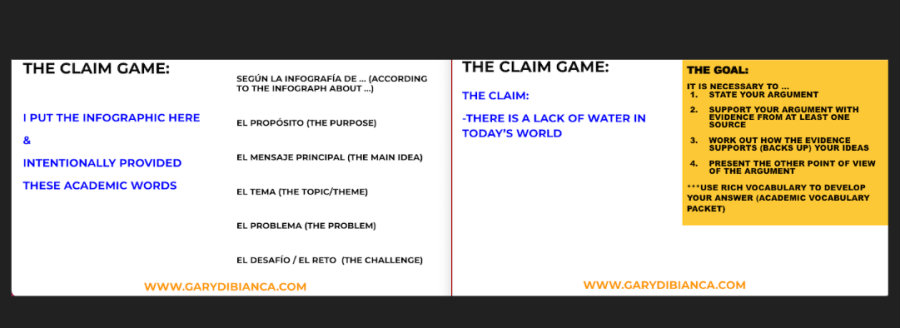

I am happy to report that the coffee commercial I wrote about last week has been a great source of Input for my students as we continue to follow this year’s Winter Olympics. I have also updated the resources. I published last week’s post and resources quickly because I wanted us all …

Continue reading Updates for Video Clip Chat: the Coffee Run and A New Class Extension Writing Game